Machine Learning: How It Works, Types & Applications (2026)

Every time Netflix surfaces a show you actually want to watch, or your bank blocks a suspicious charge before you even notice it, machine learning is working behind the scenes. These systems do not follow a rigid script written by a programmer. They learn from data, adapt to patterns, and make decisions on their own.

Machine learning has moved well beyond the research lab. In 2026, it underpins everything from the generative AI tools millions of people use daily to the fraud detection systems protecting billions of dollars in transactions. Yet despite its widespread impact, the concept itself remains misunderstood.

This guide breaks down what machine learning actually is, how it works under the hood, the different types you should know, where it shows up in the real world, and the challenges that still need solving. Whether you are a developer exploring a career pivot, a business leader evaluating AI investments, or someone who simply wants to understand the technology shaping modern life, this is where to start.


Machine Learning at a Glance

- What is it? → A branch of AI where algorithms learn patterns from data to make predictions — without being explicitly programmed for each task.

- How does it work? → Models train on data, adjust their internal parameters over repeated cycles to reduce prediction errors, then apply the learned patterns to new inputs.

- What are the main types? → Supervised learning (labeled data), unsupervised learning (unlabeled data), and reinforcement learning (trial-and-error rewards).

- Why does it matter in 2026? → ML powers GenAI chatbots, autonomous vehicles, medical diagnostics, fraud detection, and hundreds of everyday applications.

- Who should learn it? → Developers, data analysts, business strategists, and career changers — anyone willing to invest time in understanding data fundamentals first.

What Is Machine Learning?

Machine learning is a branch of artificial intelligence that uses algorithms to learn patterns from data, enabling systems to make predictions or decisions without being explicitly programmed for each specific task. As models process more data, they improve their accuracy over time — effectively “learning” from experience rather than following hard-coded rules.

The simplest way to understand the difference: traditional programming tells a computer what to do step by step. Machine learning shows a computer examples and lets it figure out the rules on its own.

Say you want to build a spam filter. In traditional programming, you would write hundreds of rules — “if subject line contains ‘FREE MONEY,’ mark as spam.” With machine learning, you feed the system thousands of emails already labeled as spam or not-spam, and it discovers the patterns that distinguish them. The result is a filter that can catch spam it has never seen before, including variations no human thought to write a rule for.
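To make the contrast concrete, here is a minimal Python sketch that compares a hand-written rule with a tiny learned filter. The six training emails are made up for illustration. The learner is a bare-bones naive Bayes classifier, which is only one of many techniques a real spam filter might use.

```python
import math
from collections import Counter

# Hypothetical labeled training emails.
TRAIN = [
    ("free money claim your prize now", "spam"),
    ("win a free prize today", "spam"),
    ("cheap loans claim now", "spam"),
    ("meeting moved to monday", "ham"),
    ("lunch today with the team", "ham"),
    ("project update for monday meeting", "ham"),
]


def rule_based(text):
    """Traditional programming: one hand-written rule."""
    return "spam" if "free money" in text else "ham"


def train_naive_bayes(examples):
    """Learn how often each word appears under each label."""
    word_counts = {"spam": Counter(), "ham": Counter()}
    label_counts = Counter()
    for text, label in examples:
        label_counts[label] += 1
        word_counts[label].update(text.split())
    vocab = set(word_counts["spam"]) | set(word_counts["ham"])
    return word_counts, label_counts, vocab


def predict(model, text):
    """Pick the label with the highest log-probability (add-one smoothing)."""
    word_counts, label_counts, vocab = model
    total = sum(label_counts.values())
    scores = {}
    for label in label_counts:
        score = math.log(label_counts[label] / total)  # prior
        n_words = sum(word_counts[label].values())
        for word in text.split():
            score += math.log((word_counts[label][word] + 1) / (n_words + len(vocab)))
        scores[label] = score
    return max(scores, key=scores.get)


model = train_naive_bayes(TRAIN)
new_email = "claim your free prize"  # never seen, and contains no "free money"
print(rule_based(new_email))         # → ham (the rule misses it)
print(predict(model, new_email))     # → spam
```

The rule only catches the exact phrase someone thought to write down. The learned model flags the new variation because its individual words appeared mostly in spam during training.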

As UC Berkeley’s introduction to machine learning explains, the discipline sits at the intersection of computer science, statistics, and domain expertise — combining computational power with mathematical rigor to extract meaningful insights from raw data.

How Does Machine Learning Work?

At a high level, machine learning follows a repeatable cycle. The specifics vary by algorithm, but most ML systems move through the same three core stages.

Step 1: Data Collection and Preparation

Everything starts with data. ML models need large, relevant datasets to learn from. This data could be structured (spreadsheets, databases) or unstructured (images, text, audio). Before training, the data must be cleaned — removing duplicates, handling missing values, and converting it into a numerical format the algorithm can process.

This step matters more than most people realize. Poor-quality data produces poor-quality models, regardless of how sophisticated the algorithm is. In practice, data scientists often spend more of their time here than on model building.
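Here is a minimal plain-Python sketch of those three cleaning steps, assuming a hypothetical set of house-listing records. Filling gaps with the median and one-hot encoding text categories are common choices rather than the only ones. Libraries such as pandas and Scikit-learn offer ready-made equivalents.

```python
from statistics import median

# Hypothetical raw records: one duplicate row, one missing value, one text column.
raw = [
    {"sqft": 1200, "city": "Austin", "price": 250_000},
    {"sqft": 1200, "city": "Austin", "price": 250_000},  # exact duplicate
    {"sqft": None, "city": "Denver", "price": 310_000},  # missing value
    {"sqft": 1800, "city": "Denver", "price": 420_000},
]


def clean(records):
    # 1. Remove exact duplicates while preserving the original order.
    seen, unique = set(), []
    for row in records:
        key = tuple(sorted(row.items()))
        if key not in seen:
            seen.add(key)
            unique.append(row)

    # 2. Fill missing square footage with the median of the known values.
    fill = median(r["sqft"] for r in unique if r["sqft"] is not None)

    # 3. One-hot encode the city so every feature is numeric.
    cities = sorted({r["city"] for r in unique})
    features = []
    for r in unique:
        sqft = r["sqft"] if r["sqft"] is not None else fill
        one_hot = [1.0 if r["city"] == c else 0.0 for c in cities]
        features.append([float(sqft)] + one_hot)
    targets = [float(r["price"]) for r in unique]
    return features, targets, cities


X, y, cities = clean(raw)
print(cities)  # → ['Austin', 'Denver']
print(X)       # → [[1200.0, 1.0, 0.0], [1500.0, 0.0, 1.0], [1800.0, 0.0, 1.0]]
```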

Step 2: Model Training and Optimization

During training, the algorithm processes the prepared data and looks for patterns. It makes predictions, compares those predictions against known outcomes (in supervised learning), and adjusts its internal parameters to reduce errors.

Think of it as a student taking practice exams. After each attempt, the student reviews mistakes and adjusts their approach. Over thousands of iterations, the model’s predictions become increasingly accurate.

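The mathematical engine driving this is optimization, typically gradient descent. Gradient descent repeatedly adjusts the model's parameters in the direction that most reduces a loss function, which is a single number measuring the gap between predicted and actual results.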
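This sketch shows gradient descent in its simplest form. It fits a straight line y = w·x + b to five made-up points by repeatedly stepping each parameter against the gradient of the mean squared error. The learning rate (`lr`) and the step count are illustrative values chosen for this toy data.

```python
def gradient_descent(xs, ys, lr=0.01, steps=5000):
    """Fit y ≈ w*x + b by minimizing mean squared error (MSE)."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(steps):
        # Predictions with the current parameters.
        preds = [w * x + b for x in xs]
        # Gradients of MSE = (1/n) * sum((pred - y)^2) with respect to w and b.
        grad_w = (2 / n) * sum((p - y) * x for p, y, x in zip(preds, ys, xs))
        grad_b = (2 / n) * sum(p - y for p, y in zip(preds, ys))
        # Step downhill: move each parameter against its gradient.
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]  # generated by y = 2x + 1
w, b = gradient_descent(xs, ys)
print(round(w, 3), round(b, 3))  # → 2.0 1.0
```

If the learning rate is too large, the updates overshoot and diverge. If it is too small, training crawls. Tuning it is part of the "practice exams" loop described above.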

Step 3: Inference and Generalization

Once trained, the model is tested on new data it has never seen. This is the moment of truth: can it apply what it learned to real-world inputs? A model that performs well on training data but fails on new data has “overfit” — it memorized examples instead of learning generalizable patterns.
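The sketch below illustrates overfitting with two hypothetical models trained on made-up data. The first is a "memorizer" that stores every training example verbatim. The second is a straight line fitted by least squares. Holding out a test set exposes the difference between them: the memorizer scores perfectly on data it has seen and badly on data it has not.

```python
import random


def split_train_test(pairs, test_fraction=0.25, seed=0):
    """Shuffle a copy of the data and hold out a fraction for testing."""
    shuffled = pairs[:]
    random.Random(seed).shuffle(shuffled)
    cut = int(len(shuffled) * (1 - test_fraction))
    return shuffled[:cut], shuffled[cut:]


def fit_memorizer(train):
    table = dict(train)
    mean_y = sum(y for _, y in train) / len(train)
    # Exact recall for inputs it has seen; a blind guess (the mean) otherwise.
    return lambda x: table.get(x, mean_y)


def fit_line(train):
    """Ordinary least squares for y = w*x + b, in closed form."""
    n = len(train)
    mx = sum(x for x, _ in train) / n
    my = sum(y for _, y in train) / n
    w = sum((x - mx) * (y - my) for x, y in train) / sum((x - mx) ** 2 for x, _ in train)
    return lambda x: w * x + (my - w * mx)


def mse(model, data):
    return sum((model(x) - y) ** 2 for x, y in data) / len(data)


# Hypothetical data: y = 3x plus a small alternating "noise" term.
data = [(float(x), 3.0 * x + (0.5 if x % 2 else -0.5)) for x in range(20)]
train, test = split_train_test(data)
for name, fit in [("memorizer", fit_memorizer), ("line", fit_line)]:
    model = fit(train)
    print(name, "train MSE:", round(mse(model, train), 2), "test MSE:", round(mse(model, test), 2))
```

The memorizer's training error is exactly zero, yet its test error is far larger than the line's. This is the signature of a model that has memorized instead of generalized.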

Successful generalization is what separates a useful ML model from an expensive experiment.

Types of Machine Learning

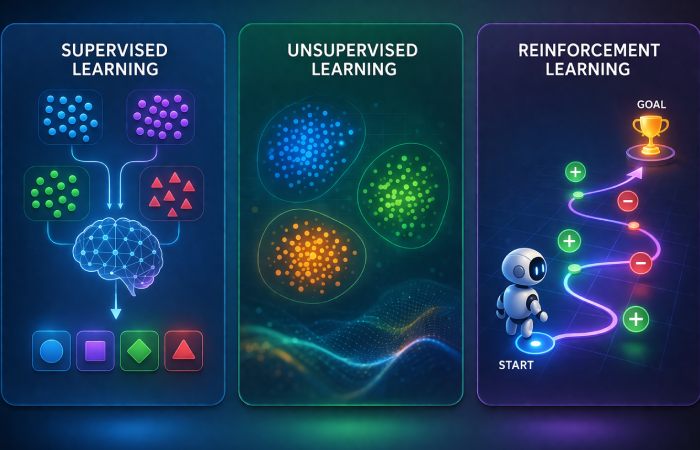

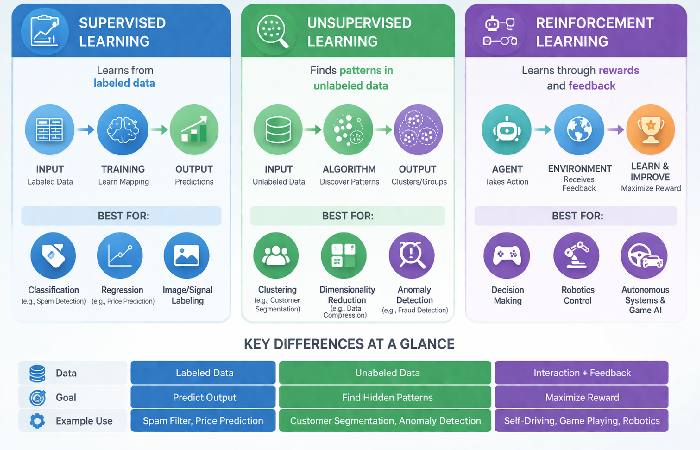

Machine learning is broadly divided into three main approaches. Each solves a fundamentally different kind of problem.

Supervised Learning

The most common type in production systems. The model trains on labeled data — input-output pairs where the correct answer is provided. It learns to map inputs to outputs and then applies that mapping to new, unseen data.

Key tasks:

- Classification: Assigning a category (email → spam or not spam)

- Regression: Predicting a number (house features → price estimate)

Real-world examples: Credit scoring, medical image diagnosis, speech recognition, sales forecasting.

Supervised learning still accounts for the majority of real-world ML applications, because many business problems come with clear labels and prediction targets: a transaction either was or was not fraudulent, and a house sold for a known price. Unsupervised and reinforcement learning often get more attention, but supervised learning is where most business value is generated today.

Unsupervised Learning

No labels, no correct answers. The model explores unlabeled data and discovers hidden patterns, groupings, or structures on its own.

Key tasks:

- Clustering: Grouping similar data points (customer segmentation by behavior)

- Dimensionality Reduction: Compressing complex data while preserving important structures

- Association: Finding relationships (customers who buy X also buy Y)

Real-world examples: Market segmentation, anomaly detection in network security, recommendation engines.
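Clustering can be sketched with k-means (Lloyd's algorithm), which is one common choice among many. The customer data below is hypothetical. Each point is a pair of values (store visits per year, average spend), and the algorithm is never told which customers belong together.

```python
import math
import random


def kmeans(points, k, iterations=100, seed=0):
    """Lloyd's algorithm: alternate assigning points and moving centroids."""
    rng = random.Random(seed)
    centroids = rng.sample(points, k)  # start from k randomly chosen data points
    for _ in range(iterations):
        # Assignment step: each point joins the cluster of its nearest centroid.
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda i: math.dist(p, centroids[i]))
            clusters[nearest].append(p)
        # Update step: move each centroid to the mean of its cluster.
        new_centroids = [
            tuple(sum(coord) / len(cluster) for coord in zip(*cluster)) if cluster else centroids[i]
            for i, cluster in enumerate(clusters)
        ]
        if new_centroids == centroids:
            break  # converged: the centroids stopped moving
        centroids = new_centroids
    return centroids, clusters


customers = [(2, 20), (3, 25), (2, 22), (30, 200), (28, 210), (32, 190)]
centroids, clusters = kmeans(customers, k=2)
for center, members in zip(centroids, clusters):
    print([round(v, 1) for v in center], members)
```

The two segments that emerge, occasional low spenders and frequent high spenders, come entirely from the structure of the data. You still have to choose K yourself and interpret what each cluster means.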

Reinforcement Learning

An agent learns by interacting with an environment and receiving feedback — rewards for correct actions, penalties for mistakes. Over time, it develops strategies that maximize cumulative reward.

Real-world examples: Game-playing AI (AlphaGo, OpenAI Five), robotic control systems, autonomous vehicle navigation, dynamic pricing.
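Here is a minimal sketch of that reward loop using tabular Q-learning, one classic reinforcement learning algorithm that is far simpler than what systems like AlphaGo use. The environment is a hypothetical five-cell corridor. The agent earns +1 for reaching the last cell, pays a small penalty for every other move, and is never told which direction is correct.

```python
import random

N_STATES = 5        # corridor cells 0..4; cell 4 is the goal
ACTIONS = (-1, +1)  # move left or move right


def step(state, action):
    """Hypothetical environment: +1 for reaching the goal, -0.01 for any other move."""
    next_state = min(max(state + action, 0), N_STATES - 1)
    done = next_state == N_STATES - 1
    reward = 1.0 if done else -0.01
    return next_state, reward, done


def q_learning(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.1, seed=0):
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in range(N_STATES) for a in ACTIONS}
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Explore occasionally; otherwise exploit the best-known action.
            if rng.random() < epsilon:
                action = rng.choice(ACTIONS)
            else:
                action = max(ACTIONS, key=lambda a: q[(state, a)])
            next_state, reward, done = step(state, action)
            # Move the estimate toward reward + discounted best future value.
            best_next = 0.0 if done else max(q[(next_state, a)] for a in ACTIONS)
            q[(state, action)] += alpha * (reward + gamma * best_next - q[(state, action)])
            state = next_state
    return q


q = q_learning()
policy = [max(ACTIONS, key=lambda a: q[(s, a)]) for s in range(N_STATES - 1)]
print(policy)  # → [1, 1, 1, 1]: always move right, toward the reward
```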

Semi-Supervised and Self-Supervised Learning

Two hybrid approaches gaining traction:

- Semi-supervised learning combines a small amount of labeled data with a large pool of unlabeled data. Useful when labeling is expensive — like medical imaging, where only a specialist can annotate correctly.

- Self-supervised learning derives its labels from the raw data itself. This is the technique behind large language models like GPT and Gemini, which learn language structure by predicting the next word (more precisely, the next token) in large amounts of text.
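Self-supervision can be illustrated with a deliberately tiny stand-in. The sketch turns raw text into (word, next word) training pairs, then counts which word most often follows each word. No human labels anything. Real language models learn this mapping with large neural networks over tokens rather than word counts, and the corpus here is made up.

```python
from collections import Counter, defaultdict


def make_next_word_pairs(text):
    """Self-supervision: each word's 'label' is simply the word that follows it."""
    words = text.lower().split()
    return list(zip(words[:-1], words[1:]))


def train_bigram(pairs):
    """Count how often each target word follows each context word."""
    counts = defaultdict(Counter)
    for context, target in pairs:
        counts[context][target] += 1
    return counts


def predict_next(model, word):
    followers = model.get(word.lower())
    return followers.most_common(1)[0][0] if followers else None


corpus = "the cat sat on the mat . the cat ate the fish ."
pairs = make_next_word_pairs(corpus)
model = train_bigram(pairs)
print(pairs[:3])                   # → [('the', 'cat'), ('cat', 'sat'), ('sat', 'on')]
print(predict_next(model, "the"))  # → cat
```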

Types of Machine Learning — Comparison

| Type | Data Used | Primary Goal | Best For | Real-World Example |
|---|---|---|---|---|
| Supervised | Labeled | Map inputs → outputs | Prediction and classification | Fraud detection, spam filters |
| Unsupervised | Unlabeled | Discover hidden patterns | Segmentation and exploration | Customer grouping, anomaly detection |
| Reinforcement | Reward signals | Maximize cumulative reward | Sequential decision-making | Robotics, game AI, autonomous driving |
| Semi-supervised | Mixed (small labeled + large unlabeled) | Leverage limited labels | Expensive-to-label domains | Medical imaging, speech recognition |

Machine Learning vs Deep Learning vs Artificial Intelligence

These three terms are related but not interchangeable. The clearest way to understand them is as nested layers.

Artificial Intelligence is the broadest concept — any system designed to simulate human intelligence. This includes everything from simple rule-based chatbots to advanced autonomous agents.

Machine Learning is a subset of AI. It is the specific approach where systems learn from data rather than following pre-written rules.

Deep Learning is a subset of machine learning. It uses multi-layered neural networks — architectures loosely inspired by the human brain — to handle complex, unstructured data like images, audio, and natural language text. The “deep” refers to the many hidden layers in these networks.

The critical practical difference: traditional ML often requires humans to manually select and engineer the features (variables) the model should pay attention to. Deep learning automates this feature extraction — the model learns what matters directly from raw data.
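The sketch below shows what manual feature engineering looks like. It turns a raw email into four numbers that a human has decided might signal spam. These features are illustrative assumptions, not a recommended set. A deep learning model would instead take the raw text (as tokens) and learn its own internal features.

```python
def engineer_features(email):
    """Hand-picked features a person chose as possible spam signals (illustrative)."""
    letters = [ch for ch in email if ch.isalpha()]
    upper_ratio = sum(ch.isupper() for ch in letters) / len(letters) if letters else 0.0
    return [
        float(len(email.split())),                 # word count
        float(email.count("!")),                   # exclamation marks
        upper_ratio,                               # share of letters that are capitals
        1.0 if "http" in email.lower() else 0.0,   # contains a link
    ]


features = engineer_features("FREE money!!! Visit http://x.io")
print(features)  # word count 4.0, three "!", 5 of 21 letters uppercase, has a link
```

Each of those choices encodes a human guess about what matters. Deep learning's advantage is that it discovers such signals automatically, at the cost of needing much more data and compute.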

AI vs ML vs Deep Learning — Comparison

| Aspect | Artificial Intelligence | Machine Learning | Deep Learning |
|---|---|---|---|
| Scope | Broadest: any system mimicking human intelligence | Subset of AI: algorithms that learn from data | Subset of ML: layered neural networks |
| Data Type | Any (rules, logic, data) | Typically structured / tabular | Large volumes of unstructured data |
| Feature Engineering | Manual (rule creation) | Semi-manual (human-guided) | Automatic (learns features from raw data) |
| Hardware | Varies | Standard CPUs usually sufficient | Typically GPUs or other accelerators, especially for training |
| Complexity | Varies widely | Moderate | High |
| Best For | Broad problem-solving | Structured predictions, classification | Image recognition, NLP, generative AI |

Real-World Applications of Machine Learning

Machine learning is not a future concept — it is embedded in systems billions of people use every day.

Healthcare and Medical Diagnostics

ML models analyze medical images to detect tumors, identify skin conditions, and flag early signs of diseases like diabetic retinopathy. Drug discovery pipelines use ML to screen candidate molecular compounds computationally, narrowing the field before laboratory testing and potentially saving years of lab work.

Finance and Fraud Detection

Banks and payment processors deploy ML to analyze transaction patterns in real time. When a purchase deviates from your typical behavior, the model flags it as potentially fraudulent — often before you notice anything yourself.
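Production fraud models learn from many signals at once. The core idea of "deviates from your typical behavior" can still be sketched with a single feature: flag a purchase whose amount sits more than a chosen number of standard deviations from the customer's historical mean. The purchase history and the threshold of 3 below are hypothetical.

```python
from statistics import mean, stdev


def is_suspicious(history, amount, threshold=3.0):
    """Flag an amount more than `threshold` standard deviations from this customer's mean."""
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return amount != mu  # no variation at all: anything different is unusual
    return abs(amount - mu) / sigma > threshold


history = [42.0, 38.5, 51.0, 45.2, 39.9, 47.3]  # hypothetical past purchases
print(is_suspicious(history, 44.0))   # → False
print(is_suspicious(history, 900.0))  # → True
```

A learned model replaces the fixed threshold and single feature with patterns drawn from location, merchant, time of day, and many other signals. The underlying principle of comparing against learned "normal" behavior stays the same.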

E-Commerce and Recommendations

Product recommendations on Amazon, content suggestions on Spotify and Netflix, and personalized ad targeting all rely on ML models that learn individual preferences from browsing and purchase history.
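The recommenders named above use far more elaborate learned models, and the sketch below is not how any of them works internally. A simple way to see the "customers who buy X also buy Y" idea, though, is to count how often items appear in the same basket. The baskets are made up.

```python
from collections import Counter
from itertools import permutations


def co_purchase_counts(baskets):
    """Count how often each ordered pair of items appears in the same basket."""
    counts = Counter()
    for basket in baskets:
        for a, b in permutations(set(basket), 2):
            counts[(a, b)] += 1
    return counts


def recommend(counts, item, top_n=2):
    """Items most often bought with `item`; ties broken alphabetically."""
    scores = {b: c for (a, b), c in counts.items() if a == item}
    return sorted(scores, key=lambda b: (-scores[b], b))[:top_n]


baskets = [
    ["laptop", "mouse", "laptop bag"],
    ["laptop", "mouse"],
    ["phone", "phone case"],
    ["laptop", "usb hub"],
]
counts = co_purchase_counts(baskets)
print(recommend(counts, "laptop"))  # → ['mouse', 'laptop bag']
```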

Autonomous Vehicles and Robotics

Self-driving systems from companies like Waymo and Tesla use ML (specifically deep learning and reinforcement learning) to process sensor data, recognize objects, and make driving decisions in real time.

Natural Language Processing and Generative AI

Large language models — the technology behind ChatGPT, Gemini, and Claude — are built on deep learning architectures trained on massive text datasets. These systems generate human-quality text, translate languages, summarize documents, and power conversational AI assistants.

Popular Machine Learning Algorithms

You do not need to master every algorithm, but understanding the most common ones helps you evaluate which approach fits your problem.

- Linear Regression: Predicts continuous values. Simple, interpretable, and often the first algorithm to try for numerical predictions.

- Logistic Regression: Despite the name, used for classification. Estimates the probability of a binary outcome (yes/no, pass/fail).

- Decision Trees: Splits data into branches based on feature thresholds. Easy to visualize and interpret.

- Random Forest: An ensemble of decision trees whose predictions are combined by voting (classification) or averaging (regression). Usually more accurate than a single tree and less prone to overfitting.

- Support Vector Machines (SVM): Finds the boundary that best separates data classes. Effective for high-dimensional data.

- K-Means Clustering: Groups data points into K clusters based on proximity. One of the most widely used unsupervised algorithms.

- Neural Networks: Multi-layered architectures that power deep learning. The foundation for image recognition, NLP, and generative AI.

The right algorithm depends on your data type, problem complexity, and how interpretable the results need to be. Start simple — linear regression or decision trees — and increase complexity only when simpler models fall short.
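To see how a decision tree chooses a branch, here is a sketch of a single split (a "decision stump") on one feature, scored with Gini impurity, a common splitting criterion. A full tree repeats this search recursively across features, and a random forest trains many such trees on resampled data. The transaction amounts and labels are hypothetical.

```python
def gini(labels):
    """Gini impurity: 0 when all labels agree, higher when they are mixed."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((labels.count(c) / n) ** 2 for c in set(labels))


def best_split(xs, labels):
    """Try every threshold on one feature; keep the lowest weighted Gini impurity."""
    best = (float("inf"), None)
    for t in sorted(set(xs)):
        left = [y for x, y in zip(xs, labels) if x <= t]
        right = [y for x, y in zip(xs, labels) if x > t]
        score = (len(left) * gini(left) + len(right) * gini(right)) / len(labels)
        if score < best[0]:
            best = (score, t)
    return best  # (weighted impurity, threshold)


amounts = [20, 35, 50, 400, 650, 800]
labels = ["ok", "ok", "ok", "fraud", "fraud", "fraud"]
print(best_split(amounts, labels))  # → (0.0, 50): "amount <= 50" separates the classes perfectly
```

The learned rule ("if amount <= 50 then ok") can be read directly, which is why decision trees are a good early choice when interpretability matters.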

Machine Learning Tools and Frameworks

Three frameworks dominate much of the practical ML ecosystem in 2026. Each serves a different purpose.

Scikit-learn, TensorFlow, and PyTorch

| Tool | Primary Focus | Learning Curve | Best For |
|---|---|---|---|
| Scikit-learn | Classical machine learning | Easy | Structured data, prototyping, beginners |
| PyTorch | Deep learning & research | Moderate | Research, custom architectures, flexibility |
| TensorFlow | Deep learning & production | Steeper | Enterprise deployment, scalable systems |

Practical guidance: If you are starting out, begin with Scikit-learn — it teaches ML fundamentals with a clean, consistent API. Move to PyTorch for research or custom deep learning projects. Choose TensorFlow when your priority is deploying models at scale in production environments.

In practice, many teams use multiple frameworks together — Scikit-learn for preprocessing and classical models, PyTorch or TensorFlow for the deep learning components of the same pipeline.

Machine Learning Challenges and Limitations

Machine learning is powerful, but it is not a universal solution. Understanding its limitations is as important as understanding its capabilities.

Data Quality and Availability

ML models are only as good as the data they learn from. Noisy, incomplete, or biased datasets produce unreliable models. Yet acquiring high-quality, well-labeled data remains expensive, time-consuming, and in sensitive domains like healthcare, subject to strict regulations.

No algorithm — no matter how advanced — can compensate for fundamentally flawed training data.

Model Drift and Ongoing Monitoring

Even well‑trained models can become less accurate over time as real‑world data and user behavior change — a phenomenon known as model drift. To keep performance stable, teams need processes for regularly monitoring metrics in production, retraining models on fresh data, and updating them safely without disrupting downstream systems.
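One simple way to monitor for drift is sketched here under stated assumptions. The monitor keeps a rolling window of recent predictions whose true outcomes have since become known. It flags the model for retraining when rolling accuracy falls a set margin below the accuracy measured at deployment. The `DriftMonitor` class, window size, and tolerance are hypothetical choices, not a standard API.

```python
from collections import deque


class DriftMonitor:
    """Track accuracy over the most recent predictions and flag a sustained drop."""

    def __init__(self, baseline_accuracy, window=100, tolerance=0.05):
        self.baseline = baseline_accuracy
        self.tolerance = tolerance
        self.recent = deque(maxlen=window)  # 1 = correct, 0 = wrong; oldest drop off

    def record(self, prediction, actual):
        self.recent.append(1 if prediction == actual else 0)

    def rolling_accuracy(self):
        return sum(self.recent) / len(self.recent) if self.recent else None

    def needs_retraining(self):
        # Only judge once the window is full, to avoid reacting to a handful of samples.
        if len(self.recent) < self.recent.maxlen:
            return False
        return self.rolling_accuracy() < self.baseline - self.tolerance


monitor = DriftMonitor(baseline_accuracy=0.90, window=10)
for _ in range(10):
    monitor.record("spam", "spam")  # all correct
print(monitor.needs_retraining())   # → False
for _ in range(3):
    monitor.record("spam", "ham")   # behavior has shifted
print(monitor.rolling_accuracy(), monitor.needs_retraining())  # → 0.7 True
```

In practice, labels often arrive late or not at all. Teams then also watch input statistics (for example, shifts in feature distributions) as an earlier warning sign.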

Algorithmic Bias and Fairness

When training data reflects historical biases, ML models learn and amplify those biases. Hiring algorithms have been shown to discriminate against certain demographics. Lending models have produced disparate outcomes across racial groups.

The NIST AI Risk Management Framework provides guidelines for identifying and mitigating these risks. Organizations adopting ML at scale are increasingly required to audit their models for fairness and document potential harms.
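A first-pass fairness audit often starts by comparing outcome rates across groups. The sketch below computes approval rates per group and the ratio of the lowest rate to the highest. A ratio below 0.8 fails the "four-fifths rule", a screening heuristic from US employment-selection guidelines. This is one illustrative metric rather than a method prescribed by the NIST framework, and the loan decisions are made up.

```python
from collections import defaultdict


def selection_rates(decisions):
    """decisions: iterable of (group, approved) pairs. Returns the approval rate per group."""
    totals, approved = defaultdict(int), defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {g: approved[g] / totals[g] for g in totals}


def disparate_impact_ratio(rates):
    """Lowest group rate divided by highest; below 0.8 fails the four-fifths heuristic."""
    return min(rates.values()) / max(rates.values())


# Hypothetical loan decisions for two groups of 100 applicants each.
decisions = [("A", True)] * 60 + [("A", False)] * 40 + [("B", True)] * 35 + [("B", False)] * 65
rates = selection_rates(decisions)
ratio = disparate_impact_ratio(rates)
print(rates)            # → {'A': 0.6, 'B': 0.35}
print(round(ratio, 3))  # → 0.583, below 0.8, so this model warrants a closer look
```

A failing ratio does not prove discrimination on its own, and a passing one does not rule it out. It is a prompt for deeper investigation of the data and the model.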

Interpretability and Explainability

Simple models like linear regression are easily interpretable — you can trace exactly how each variable influenced the prediction. Complex deep learning models, however, often function as “black boxes.” They deliver accurate results but offer little insight into why they made a specific decision.

This matters especially in high-stakes domains like healthcare, criminal justice, and finance, where stakeholders need to understand and trust the model’s reasoning before acting on its recommendations.
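This sketch shows why a linear model is easy to explain. Its prediction is the bias plus a sum of weight × value terms, so each feature's contribution can be read off directly. The house-price weights below are hypothetical, not fitted to real data.

```python
def explain_linear(weights, bias, features):
    """Break a linear prediction into per-feature contributions (weight * value)."""
    contributions = {name: weights[name] * value for name, value in features.items()}
    prediction = bias + sum(contributions.values())
    return prediction, contributions


# Hypothetical model: price = 50,000 + 120*sqft + 15,000*bedrooms - 800*age_years
weights = {"sqft": 120.0, "bedrooms": 15_000.0, "age_years": -800.0}
prediction, parts = explain_linear(weights, 50_000.0, {"sqft": 1500, "bedrooms": 3, "age_years": 10})
print(prediction)  # → 267000.0
print(parts)       # → {'sqft': 180000.0, 'bedrooms': 45000.0, 'age_years': -8000.0}
```

A deep network has no equivalent one-line breakdown. Post-hoc explanation techniques can approximate one, but they estimate the model's reasoning rather than expose it exactly.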

Machine Learning Trends in 2026

The ML landscape is evolving rapidly. According to McKinsey’s global AI adoption research, more than 70% of organizations now use AI or machine learning in at least one business function — up from roughly 50% just two years ago. Industry estimates suggest the global machine learning market was worth tens of billions of dollars in 2025 and is growing at a strong double‑digit rate year over year.

Key trends shaping the field right now:

- Generative AI and Large Language Models: Transformer-based models continue to advance, powering chatbots, code assistants, content generation, and multimodal AI systems that process text, images, and audio simultaneously.

- Agentic AI: ML-powered autonomous agents that can plan, reason, call tools, and execute multi-step workflows are moving from research prototypes into production use cases such as customer support, operations, and FinOps.

- Edge AI: Optimizing models to run locally on smartphones, IoT devices, and embedded systems — reducing latency and preserving privacy by keeping data on-device.

- Sustainable and efficient ML: Training large models consumes significant energy, so teams are prioritizing smaller, more efficient architectures, hardware acceleration, and edge deployment to cut costs and carbon impact while keeping performance high.

- MLOps Maturity: Organizations are investing in the infrastructure to deploy, monitor, version, and update ML models at scale — treating ML systems with the same operational rigor as traditional software.

- AI Governance and Regulation: The EU AI Act, NIST frameworks, and similar policy initiatives are driving demand for explainable, auditable, and bias-aware ML systems.

Common Mistakes When Getting Started with Machine Learning

- Jumping to complex models too early. Start with simple algorithms. If linear regression solves 80% of your problem, switching to a neural network adds complexity without proportional value.

- Ignoring data quality. Spending weeks tuning a model on dirty data wastes time. Invest upfront in data cleaning, validation, and feature engineering.

- Overfitting the training data. A model that memorizes training examples instead of learning patterns will fail on real-world inputs. Always validate with a held-out test set.

- Choosing frameworks before understanding concepts. Learn what ML does before worrying about which tool to use. Framework choice should follow from your problem, not the other way around.

- Expecting instant results. ML is iterative. Production-quality models require multiple rounds of experimentation, evaluation, and refinement.

Who Should Learn Machine Learning — And Who Should Wait

Best for:

- Software developers wanting to add ML capabilities to their skill set

- Data analysts looking to move beyond spreadsheets into predictive modeling

- Business leaders evaluating AI investments and needing to ask the right questions

- Career changers with a quantitative background (math, statistics, engineering) seeking high-demand roles

Not for (right now):

- Those expecting to build production systems without understanding data fundamentals

- Anyone looking for a “no-code, instant-results” solution — ML requires patience and iteration

- Anyone planning to jump straight to deep learning while skipping the statistics and linear algebra it builds on

The critical reality: most people using ML in 2026 will not train models from scratch. They will fine-tune pre-trained models, use AutoML platforms, or integrate ML services via APIs. Understanding the concepts matters more than memorizing every algorithm.

Final Verdict

Machine learning is not a buzzword — it is the foundational technology reshaping industries from healthcare and finance to entertainment and transportation. Understanding how it works, which types solve which problems, and where its limitations lie gives you a genuine advantage, whether you are building models yourself or making strategic decisions about AI adoption.

Start with supervised learning and structured data. Master the fundamentals before chasing the latest trends. And remember that the most sophisticated algorithm in the world cannot rescue poor-quality data.

The field is moving fast. But the core principles covered in this guide — learning from data, generalizing to new situations, and rigorously evaluating results — remain constant.

Frequently Asked Questions

Q: What is machine learning in simple terms?

A: Machine learning is a type of artificial intelligence that allows computers to learn from data and make decisions without being explicitly programmed for every scenario. Instead of following fixed rules, ML systems discover patterns on their own and improve as they process more information.

Q: What is the difference between AI and machine learning?

A: Artificial intelligence is the broad field covering any technology that simulates human intelligence. Machine learning is a specific subset of AI — the approach where systems learn from data rather than following manually written rules. All machine learning is AI, but not all AI is machine learning.

Q: What are the three main types of machine learning?

A: The three main types are supervised learning (training on labeled data to make predictions), unsupervised learning (finding hidden patterns in unlabeled data), and reinforcement learning (learning through trial-and-error with reward signals). Supervised learning is the most widely used in practice.

Q: Is machine learning hard to learn?

A: The fundamentals are accessible if you have a basic understanding of math (statistics, linear algebra) and can program in Python. The learning curve steepens with deep learning and advanced model architectures. Starting with Scikit-learn and simple datasets is the most practical path for beginners.

Q: What programming language is best for machine learning?

A: Python is the dominant language for machine learning, supported by a massive ecosystem of libraries including Scikit-learn, PyTorch, TensorFlow, NumPy, and Pandas. R is also used in statistical modeling, but Python is the industry standard for both research and production ML.

Q: How is machine learning used in everyday life?

A: Machine learning powers email spam filters, voice assistants (Siri, Alexa), Netflix and Spotify recommendations, Google search results, fraud detection on credit cards, navigation apps like Google Maps, and the generative AI chatbots millions of people use daily.

About Technologyies

Technologyies.com publishes practical, easy-to-understand content on health, technology, business, marketing, and lifestyle. Articles are based mainly on reputable, publicly available information, with AI tools used only to help research, organise, and explain topics more clearly so the focus stays on real‑world usefulness rather than jargon or unnecessary complexity.

Disclaimer

This article is for general information and education only and should not be taken as professional, legal, financial, or technical advice. While we aim to keep the content accurate and current, machine learning practices, tools, and regulations evolve quickly, and some details may change over time. Always verify critical information with up‑to‑date primary sources, official documentation, or qualified experts before making decisions or implementing any system based on what you read here.