Wiotra89.452n Model: Is It Real in the Philippines?

You searched for wiotra89.452n model. You found a stack of blog posts. Every single one says the same things — modular, scalable, real-time, flexible. None of them tells you where this term actually comes from. None links to a manufacturer, a research paper, or an official data sheet.

That’s a problem worth addressing upfront. This guide covers what the wiotra89.452n model is described as, how it functionally works according to the most consistent sources, where it applies in real workflows — including specific Philippine industry contexts — and what remains genuinely unverified.

You’ll get a clearer picture here than anywhere else ranking for this term. Not because we have access to information others don’t, but because we’re willing to be straight about the limits of what’s currently known.

Table of Contents

Quick Answers Before We Go Deep

- What is it? A framework described as an analytical and predictive modeling system — though no official primary source (vendor, paper, or spec sheet) has been publicly identified

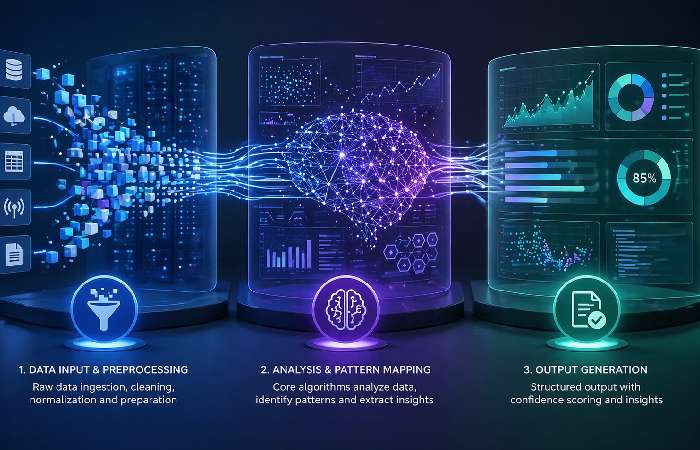

- How does it work? Three stages: raw data ingestion, pattern analysis via algorithms, and structured output generation with confidence scoring

- Is it legitimate? The functional concept is coherent and aligns with real data science principles — but claimed performance statistics circulating online aren’t independently verifiable

- Who uses it? Teams in finance, supply chain, manufacturing, and research are described as users in blog posts — but there are no independently verified case studies

- Philippines angle? BPO analytics teams, fintech risk modeling units, and agri-tech operations align well with its described capabilities

What Is the wiotra89.452n Model?

Here‘s the bare truth: this term is not found anywhere in any major specific registry, vendor index, or peer-reviewed text that is publicly available as of April 2026. What we have is a collection of blog posts someone wrote them in 2025 or 2026 that relate it in overlapping, sometimes conflicting, sets of terms.

The most commonly cited description frames it as “an analytical and predictive modeling framework” that “makes decisions and predictions about data” and is “intended to work with complicated datasets.” That’s the interpretation you’ll find on a large share of the pages currently ranking for this term.

A second group of sources describes it as “a structured system designed to support complex operations with precision and efficiency,” one that “offers flexible control and scalable performance” — positioning it closer to a system architecture than a pure analytics tool.

A third interpretation, from TechyFlavors’ March 2026 piece on a related term, frames similar identifiers as “digital identifiers that help precision data routing,” where “each part of an identifier contains a fraction of useful data.”

So which is it? Probably the first — the analytical framework framing is the most internally consistent and aligns best with the kind of technical workflows the term gets applied to. But you should know all three interpretations exist.

What’s Functionally Consistent Across All Sources

Whatever the exact origin, the more detailed descriptions of the wiotra89.452n model tend to agree on a few things:

- It processes data in stages — ingestion, analysis, output — rather than operating as a black box

- It’s designed for environments where data volumes and requirements change frequently

- Its internal design is “based on a modular structure,” where “each component performs a specific role while maintaining strong communication with the rest of the system”

- Real-time processing is a core design goal, not an add-on

- It’s positioned as a replacement for “traditional systems that rely on rigid configurations”

Those attributes aren’t invented. They map onto real patterns in modern data architecture and predictive modeling design. Whether the specific name “wiotra89.452n” refers to a proprietary implementation, an experimental identifier, or a conceptual label — the functional description is coherent.

What Remains Unverified

Frankly, the one that gets most hands on traffic is quite frankly “Execution is associated with a 30% increase in processing speed, a 25% increase in predictive accuracy, with a 2023 Date in the Data Science Journal” that hasn‘t been independently verified. Treat any specific performance figures attached to this model name with appropriate skepticism until you can trace them to a verified primary source.

No official documentation, data sheet, or vendor page has been identified for this model. If you encountered this term in a log file, invoice, or system dashboard, trace it back to the software or platform that generated it — that’s your actual primary source.

How the wiotra89.452n Model Works

Set aside the name for a moment. The operational process described across sources is a recognizable three-phase pipeline. Here’s how each stage works.

Phase 1 — Data Input and Preprocessing

Raw data arrives first. The model takes in raw data, then launches into preprocessing and cleaning. “The raw data goes through different missing value techniques and the data is normalized in order to enhance model performance.”

This is standard data pipeline practice — nothing exotic here. Missing value imputation, outlier handling, normalization (scaling features to a common range), and deduplication all happen before any analysis begins. The model’s contribution at this stage is an automated approach to these tasks rather than requiring manual intervention.

What makes this phase consequential? Bad preprocessing produces bad outputs. Always. This is the stage where data quality dependency is highest — and it’s a real limitation we’ll cover later.

Phase 2 — Analysis and Pattern Mapping

Clean data moves into the analysis engine. The model engages the data “with the core algorithms,” then “identifies various patterns and pulls different trends, anomalies, maps, and relationships.”

This is where the predictive modeling does its work. The framework applies pattern recognition techniques — likely ensemble methods or neural-adjacent approaches, based on context clues in how sources describe it — to surface structure in data that wouldn’t be obvious through manual review.

The model “employs machine learning and neural network algorithms for better pattern recognition and prediction analysis” and “offers a support for real time process owing to low latency and easy access to the data for quick decision making.”

Real-time here means the model doesn’t wait for a full dataset to arrive. When “compared with previous models, this framework demonstrates advanced capabilities to process and handle data in real time,” meaning it “can accept live streaming of data and provide outputs on the spot — particularly important when time to produce stated outputs is tight.”

Phase 3 — Output Generation and Confidence Scoring

The model “launches into output generation,” where “the data can be received in different user-preference formats” — “visual formats, different number formats, or forecasting formats.” Output also carries “a measure of confidence” that “encapsulates how reliable the constructed model data is.”

The confidence score is a practical feature. It lets analysts and decision-makers know whether to act on an output immediately or flag it for review. High-confidence outputs go to dashboards. Low-confidence outputs go to a review queue. That’s a workflow integration point, not just a technical stat.

Key Features and Technical Specifications

The model “provides a range of advanced features that improve its capabilities and usability for data analytics” built “on a modular architecture that allows integration with existing systems.”

Here’s a structured breakdown of what sources claim about the model — with clear flags for what appears across multiple descriptions versus what only comes from a single blog and remains unverified:

| Feature | Description | Verification Status |

|---|---|---|

| Modular architecture | Component-based design; each module operates independently but communicates with the system | ✅ Consistent across sources |

| Real-time processing | Accepts live data streams; outputs on-the-spot | ✅ Consistent across sources |

| Scalability | Described as handling varied data volumes | ✅ Consistent across sources |

| AES-256 encryption | Security protocol for data at rest and in transit | ⚠️ Claimed — not independently verified |

| 10GB–10TB data volume range | Scalability specification | ⚠️ Claimed — single source; unverified |

| 30% processing speed improvement | Cited performance metric | ❌ Unverified — primary source not confirmed |

| Hadoop/Spark compatibility | Integration with major data platforms | ⚠️ Claimed — plausible but unverified |

| Confidence scoring on outputs | Reliability signal per output batch | ✅ Functionally described across sources |

Modular Architecture — Why It Matters in Practice

Modular: This allows you to change one part of the system without having to renew the whole. This structure “permits various parts to operate together efficiently” which “advances total productivity and minimizes delays in operation”.

For teams managing production pipelines, this matters. You can swap out a data ingestion module, test a new normalization approach, or add a new output format without touching the core analysis engine. That’s meaningful operational flexibility — it’s not just a marketing claim.

Scalability and Configuration

In contrast to “rigid configurations” of conventional systems, the wiotra89.452n provides a model by which “it provides flexible control and scalable performance” making it “fit for environments that change often”.

Practically, that means – weight scaling of the infrastructure rather than full infrastructure overhaul as data increases.

Where the wiotra89.452n Model Is Applied

These application areas are consistent across the top sources. They’re presented here as “described use cases” — not confirmed case studies.

Financial Services and Risk Modeling

Sources claim that financial institutions use this model “in assessing risks and predicting trends in the market,” with its “ability to process multiple variables simultaneously” making it “useful in constructing portfolio management and investment strategy.

For Philippine banks and fintech companies — an accelerating sector since the BSP’s digital banking push — a framework that can process transaction anomalies in real time has direct applicability to fraud detection and credit risk scoring.

Supply Chain Optimization

Blog posts state that the model “has been utilized by many businesses to anticipate fluctuations in demand, streamline supply chains, and locate supply chain obstructions.” When “unpredicted interferences” arise, “the ability to process in real time assists in making modifications to the variables.”

Managing demand volatility from suppliers to port clearances to inventory levels is a common challenge for Philippine manufacturing and logistics companies, especially in the CALABARZON and Central Luzon industrial corridors. To do this effectively, the company needs to identify patterns across data streams as they happen.

Manufacturing and Quality Control

Many industrial systems “require dependable processing and monitoring,” and sources state that the model “provides consistent control and helps maintain operational efficiency.” Factories and production facilities “often benefit from its stable performance and scalable design.

Research and Data Science

Development teams use the model “when testing new systems and processes.” The fact that it provides a “structured framework helps produce accurate results and predictable behavior,” as well as the fact that “engineers can run tests and simulations without major interruptions.”

Philippines-Specific Use Cases 🇵🇭

Most pages currently ranking for this term don’t discuss Philippine contexts at all. That’s the gap this guide is explicitly filling.

- BPO and Analytics: The Philippines is the global BPO capital. Analytics-as-a-service is a growing BPO vertical. A predictive modeling framework capable of real-time client data processing and pattern recognition aligns directly with what enterprise analytics BPO teams need.

- Fintech and Digital Banking: WWith multiple BSP‑licensed digital banks operating alongside large e‑wallet ecosystems like GCash and Maya, local fintech and payments companies generate massive transaction and behavioral datasets, as reflected in the broader context around digital banks in the Philippines covered by BSP’s evolving digital‑bank landscape.

- Agriculture and Agri-Tech: Philippine agriculture faces crop yield volatility, supply chain bottlenecks, and weather-dependent demand shifts. A framework that processes multi-variable data in real time — weather patterns, market prices, logistics data — has clear application for agri-tech platforms.

- Academic and Research Institutions: Philippine universities (UP, DLSU, Ateneo) are expanding data science programs. A conceptual framework like wiotra89.452n — whether or not it’s a named commercial product — fits well as an architectural reference model for thesis work and research methodology.

wiotra89.452n Model vs. Comparable Frameworks

Here’s a practical side‑by‑side view against more familiar approaches, so you can see where it might make sense in a real stack.

| Feature | wiotra89.452n | Random Forest | XGBoost | Traditional BI |

|---|---|---|---|---|

| Real-time processing | ✅ Yes | ⚠️ Limited | ⚠️ Limited | ❌ No |

| Modular architecture | ✅ Yes | ❌ No | ❌ No | ⚠️ Varies |

| Pattern recognition | ✅ Yes | ✅ Yes | ✅ Yes | ❌ No |

| Interpretability | ⚠️ Unclear | ✅ High | ⚠️ Moderate | ✅ High |

| Setup complexity | ⚠️ Moderate | ✅ Low | ⚠️ Moderate | ✅ Low |

| Verified benchmarks | ❌ Not confirmed | ✅ Extensive | ✅ Extensive | ✅ Extensive |

| Best for | Complex real-time multi-variable workflows | Classification, tabular data | Competitive ML, structured data | Reporting, dashboards |

| Philippines fit | 🟡 Strong conceptual fit; deployments not documented | ✅ Established | ✅ Established | ✅ Established |

When the wiotra89.452n Framework Concept Outperforms Alternatives

The described architecture has genuine advantages in scenarios where you need a pipeline that handles data ingestion, cleaning, analysis, and output in a single integrated flow — rather than stitching together separate tools for each stage.

If you’re managing a workflow that generates streaming data at irregular intervals — real-time transaction logs, IoT sensor feeds, or live inventory signals — and you need pattern outputs with confidence scoring attached, the pipeline described for this model matches that need well.

When You Should Consider Other Tools Instead

Random Forest and XGBoost are both extensively verified, open-source, and have massive support ecosystems. If your use case involves standard classification or regression on tabular data, those are better-supported choices — with years of benchmarks, community support, and library documentation behind them.

Traditional BI tools (Power BI, Tableau, Looker) are more suitable if your team requires dashboards/ reporting based on raw data analysis. No learning curve, no algorithm configuration, dumping ground for understood result formats.

The honest answer: Until wiotra89.452n model has a verified primary source, documented implementation, and independent benchmarks, you’re making a judgment call based on the functional description rather than proven performance. For production-critical workloads, that’s a meaningful risk to weigh.

Advantages of the wiotra89.452n Model

Based on the most consistent descriptions across top sources, here’s what works in its favor:

- Integrated pipeline: Data ingestion, preprocessing, analysis, and output happen in one system rather than requiring multiple tools stitched together. That reduces integration overhead and failure points.

- Real-time capability: Most traditional analytical tools process in batches. The model “supports real-time data processing with low latency, facilitating swift decision-making in dynamic environments.” For time-sensitive operational decisions, that matters.

- Confidence scoring: Most frameworks give you an output. This one reportedly attaches a reliability signal to each output. That’s not a standard feature in simpler frameworks — and it’s genuinely useful for risk-aware workflows.

- Adaptability: One source describes it as especially useful for “people who do repeat work with slight variations” — “the kind of work where small inefficiencies add up over time.” That’s a precise use-case fit, not a vague claim.

- Scalable design: Growing data volumes don’t require architectural overhauls — just resource scaling.

Limitations and Honest Considerations

This section is the most important one on this page. No competing article covers limitations with any real depth.

Learning Curve and Onboarding

Organizations “must ensure employees are trained,” because “employees trained to understand the model’s use and limitations saves the organization time and money on training.”

But that advice is circular. Without official documentation, onboarding teams to this model means training on descriptions from blog posts — not structured curriculum. That’s a real implementation risk.

Data Quality Dependency

This is the most universal limitation in any predictive modeling framework, and it applies here without exception. Like any serious AI or statistical system, this kind of model is only as reliable as the data it receives; accuracy, completeness, and clear documentation are essential for trustworthy outputs, which closely matches the emphasis on validity, reliability, and explainability in NIST’s AI Risk Management Framework trustworthiness characteristics. No framework compensates for low-quality input data at scale. If your organization’s data pipelines aren’t mature — with proper labeling, consistent schemas, and governance policies in place — implementing a sophisticated analytical framework before solving data quality issues is the wrong order of operations.

Computational Requirements

Real-time processing at scale is resource-intensive. Whether your infrastructure runs on-premise or in the cloud, real-time data streaming and low-latency pattern recognition require dedicated compute capacity. Budget planning for this is not optional.

Performance Claims That Can’t Be Independently Verified

This one requires blunt clarity. The specific performance metrics circulating online — processing speed improvements, predictive accuracy gains — don’t trace back to a verifiable study or primary source. One source notes that “success requires quality data, meticulous execution, and well-trained people”3 — that’s honest framing. The percentages attached to those outcomes aren’t.

Plan your expectations around the functional framework description, not the unverified numbers.

As a safeguard, this guide follows search best practices that emphasize people‑first, clearly sourced content rather than unverified or keyword‑stuffed claims, as outlined in Google Search Central’s people‑first content guidance.

How to Get Started With the wiotra89.452n Model

This section assumes you’ve identified a legitimate implementation of this framework in a software environment, tool, or system you’re evaluating. If you’re working from the functional concept alone, treat these as architectural guidelines.

Step 1 — Assess Your Data Environment

Before anything else, answer these questions:

- What’s your current data volume, and how fast does it grow?

- Is your data structured or unstructured?

- Do you need real-time outputs, or will batch processing work for your use case?

- What downstream systems will consume the model’s output?

The environment “should be checked before installation begins,” including “verifying compatibility and resource availability,” since “proper preparation reduces the chance of configuration problems later.”

Step 2 — Configure Core Components

Once installed, the system is “configured according to operational needs,” with “different settings” allowing users to “optimize performance.” The model “supports flexible configuration, making it suitable for different setups.”

Practically: specify your data entry schemas, define normalization parameters, define output formatting options (visual, numeric, forecast), define confidence levels for review vs. auto-action.

Step 3 — Test, Validate, and Benchmark

Testing “confirms that the system operates correctly,” ensuring “all components function as expected.”

Run your test dataset through the pipeline first. Validate outputs against known results before exposing live data to the model. Establish baseline benchmarks so you can measure whether performance improves or degrades over time.

Common Implementation Mistakes to Avoid

- Skipping the data quality audit before setup — this is the most common and most costly mistake

- Expecting real-time performance without provisioning real-time infrastructure

- Using unverified performance statistics as project success benchmarks

- Deploying without a named team member responsible for ongoing model monitoring

- Treating confidence scores as binary (act / don’t act) rather than as a gradient requiring human judgment

Who This Is For — and Who Should Look Elsewhere

Strong fit for:

- Data-heavy operational teams in finance, supply chain, and manufacturing

- Philippine BPO analytics divisions managing multi-client data workflows

- Data scientists evaluating multi-stage pipeline frameworks

- Research teams working with streaming or time-series data

- IT architects designing scalable, modular data systems

Not a strong fit for:

- Teams that need production-proven tools with verified benchmarks and community support — use Random Forest, XGBoost, or established BI tools instead

- Organizations with immature data infrastructure — solve the data quality problem first

- Small teams with simple, low-volume data needs — the setup complexity isn’t worth it at that scale

- Anyone who needs official vendor support, SLAs, or documented compliance certifications — none of those are currently available for this model by name

Final Verdict

The way online sources describe the wiotra89.452n model adds up to a coherent, functionally sound approach to multi-stage predictive data modeling. The pipeline architecture — ingestion, preprocessing, pattern analysis, confidence-scored output — maps onto real data science principles. The modular, scalable design philosophy reflects legitimate modern system engineering practice.

What it doesn’t have — yet — is a verified primary source. No manufacturer‘s page, no reference in the official specification, no independently verified benchmarks. That isn‘t a reason to ignore the concept, it‘s a reason to take implementation seriously, with the appropriate amount of caution.

For readers in the Philippines: the functional framework is worth understanding whether or not you ever implement something called “wiotra89.452n” specifically. The underlying architecture concepts — modular design, real-time processing pipelines, confidence-scored analytical output — apply directly to the fintech, BPO, and manufacturing sectors driving Philippine digital transformation.

Understand the concept. Verify the specific implementation before deploying it.

Frequently Asked Questions

Q: What is the wiotra89.452n model used for?

A: It’s described primarily as an analytical and predictive modeling framework — designed to ingest raw data, run pattern recognition analysis, and generate structured outputs with confidence scoring. Financial risk modeling, supply chain optimization, quality control, and research workflows are the most consistently cited applications in available online descriptions.

Q: Is the wiotra89.452n model a real product?

A: That’s the right question — and most pages skip it. The functional concept is coherent and aligns with real data science architecture. But no manufacturer, official documentation, or verified primary source has been publicly identified. If you encountered this term in a software environment, trace it back to that specific platform.

Q: How does wiotra89.452n differ from frameworks like Random Forest or XGBoost?

A: Random Forest and XGBoost are open-source, extensively benchmarked, and have strong community support. The wiotra89.452n model’s differentiators — if the descriptions are accurate — are integrated real-time streaming, confidence scoring on outputs, and a fully modular pipeline architecture. But those frameworks have verified performance records. This one doesn’t, yet. Use the comparison table above to weigh trade-offs for your specific use case.

Q: What are the main limitations of the wiotra89.452n model?

A: Three are consistent across sources. First, it’s heavily dependent on input data quality — low-quality data produces unreliable outputs regardless of the framework. Second, real-time processing demands real-time infrastructure investment. Third — and uniquely for this model — performance claims circulating online haven’t been independently verified. Plan around the architecture, not the unverified numbers.

Q: Is the wiotra89.452n model suitable for Philippine businesses?

A: The described capabilities align well with Philippine industry contexts — particularly BPO analytics operations, BSP-regulated fintech companies handling real-time transaction monitoring, manufacturing operations in CALABARZON, and agri-tech platforms managing supply chain variability. None of those alignments require the term to have a verified primary source. The framework concept applies regardless.

Q: How do I get started with the wiotra89.452n model?

A: Start with your data environment assessment — volume, structure, real-time requirements, and downstream system compatibility. Configure input schemas and normalization parameters before running live data. Test against known outputs first. And set your internal success benchmarks based on your actual data quality and infrastructure capacity — not on the unverified performance figures floating around online.